Shadow AI Is Already in Your Organization — Here’s How to Find and Fix It

Most companies think their AI risk begins when leadership approves a tool.

In reality, it usually begins months earlier.

A marketing manager uses an AI writing assistant to summarize customer interviews. A recruiter pastes candidate CVs into a public chatbot to draft feedback. A support rep runs transcripts through an AI summarizer to speed up replies. A salesperson uploads call notes into a browser-based AI tool to generate follow-ups.

None of these decisions go through procurement. None involve security reviews. And almost none are logged anywhere.

What is Shadow AI?

Shadow AI refers to the use of unauthorized or unmanaged artificial intelligence tools within an organization without formal IT approval, governance oversight, or compliance controls.

The everyday shortcuts described above are shadow AI in practice: the quiet spread of unmanaged AI tools inside organizations. It is already happening, whether or not leadership has approved AI adoption.

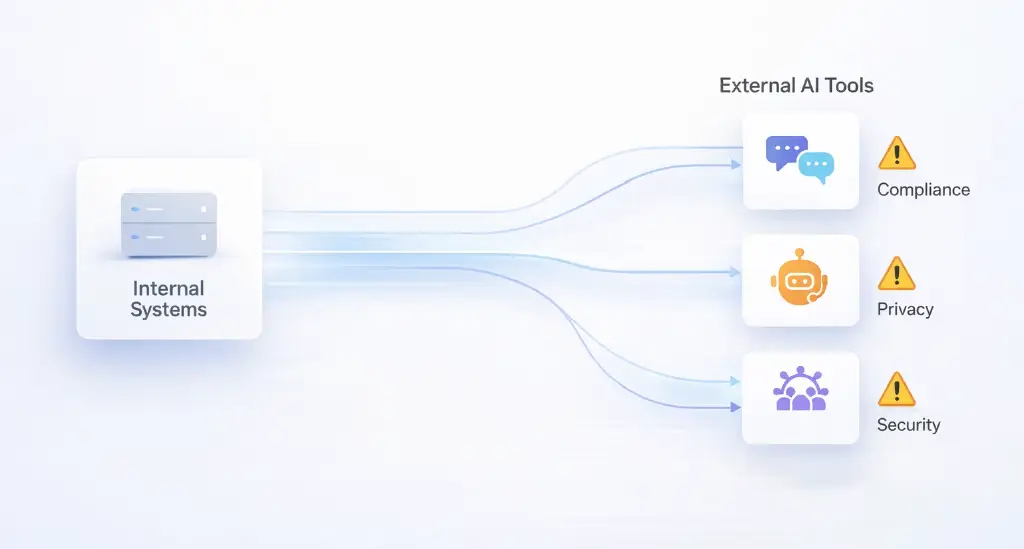

The real risk isn’t that employees are experimenting. It’s that they’re doing it without guardrails, without data visibility, and without governance. By the time companies begin discussing AI compliance frameworks, sensitive data has often already passed through tools they don’t control.

Understanding shadow AI means shifting the conversation from “Should we adopt AI?” to “How much AI are we already using without knowing it?”

Shadow AI behaves a lot like shadow IT did a decade ago, but with one important difference. The barrier to entry is far lower.

Employees don’t need to install software or request system access. Instead, a simple browser tab is all it takes. With a personal email address, access is granted in seconds. From there, sensitive information gets pasted directly into a prompt field. As a result, the entire workflow operates outside enterprise infrastructure.

What makes shadow AI especially dangerous is that it hides inside normal productivity improvements. People use it to move faster, not to break rules.

A product team trying to speed up documentation might feed internal roadmaps into an AI tool. A finance analyst might upload spreadsheet data to generate forecasts. A customer success manager might paste conversation logs to craft sensitive client responses.

From the employee’s perspective, these actions feel harmless. From a compliance perspective, they can introduce serious data privacy risks.

Unlike official enterprise software, most public AI tools don’t offer contractual guarantees around data storage, training usage, or jurisdictional compliance. That means confidential information may be retained, logged, or processed in ways the organization cannot audit.

The challenge is that shadow AI doesn’t announce itself. It spreads through convenience.

For many leaders, the initial instinct is to treat shadow AI as a productivity issue rather than a governance one. But unmanaged AI usage quickly becomes a compliance problem, particularly in industries dealing with customer data, financial information, or regulated communications.

One global consulting firm discovered this firsthand when an internal audit revealed employees had uploaded client strategy documents into public AI tools for summarization. Nothing malicious had occurred, but the firm had no way to confirm whether the data had been stored or reused. Legal teams suddenly had to assess exposure across dozens of client accounts.

Another well-known example involved engineers at a major tech company who pasted proprietary code into an AI assistant to debug it faster. The company responded by restricting external AI tools entirely until secure internal alternatives could be implemented.

| Category | Shadow AI | Governed AI |
|---|---|---|
| Approval Process | Used without IT or compliance approval | Approved and reviewed by IT and legal teams |
| Data Visibility | No centralized tracking of data flow | Full audit logs and traceability |
| Compliance Controls | Unclear or nonexistent | Built-in compliance and regulatory safeguards |
| Data Storage | Unknown retention and jurisdiction | Defined data storage policies |
| Vendor Contracts | No enterprise agreements | Enterprise-grade contractual protections |
| Security Standards | Varies by tool, often consumer-grade | Enterprise-level encryption and access controls |
| Audit Readiness | Cannot demonstrate governance | Full audit and reporting capability |
| Employee Behavior | Hidden experimentation | Structured and guided AI usage |
| Risk Level | High and unpredictable | Managed and measurable |
| Strategic Value | Short-term productivity gain | Long-term enterprise advantage |

These incidents illustrate the core issue: shadow AI introduces uncertainty. Compliance isn’t just about preventing breaches; it’s about maintaining control over where data flows and how it’s processed.

When organizations lack visibility into AI usage, they also lose the ability to demonstrate compliance. Regulations around data protection increasingly require not only secure handling but also auditable processes. If leadership can’t show how AI tools are governed, risk exposure grows quickly.

Conversational workflows amplify this problem even further.

Much of today’s shadow AI sits inside conversations. Employees feed transcripts, emails, chat logs, and customer notes into AI tools to summarize, classify, or respond.

This is where conversational AI compliance becomes critical.

Customer conversations often contain personally identifiable information, financial details, or sensitive contextual data. When those conversations are processed in unmanaged tools, organizations risk violating data residency laws, consent requirements, and industry-specific privacy rules.

The danger isn’t always obvious. A support agent pasting a conversation into an AI tool may believe they’re only sharing text. But from a governance perspective, they’re transferring customer data outside the organization’s controlled systems.

Multiply this across hundreds of employees, and shadow AI becomes less of a fringe issue and more of a systemic enterprise AI risk.

The problem isn’t just the tools themselves. It’s the lack of centralized oversight.

Some organizations react to shadow AI by attempting to prohibit AI use entirely. On paper, this sounds responsible. In practice, it rarely works.

Employees continue using AI tools because the productivity gains are real. Prohibitions simply push usage further underground, making it even harder to monitor.

The more effective approach is to acknowledge that AI use is inevitable and shift focus toward governance.

This means building an environment where employees don’t need to rely on external tools because secure, compliant alternatives already exist internally.

When organizations provide centralized platforms designed for enterprise AI risk management, employees naturally migrate toward them. Convenience, after all, is what drove shadow AI in the first place.

The key is replacing uncontrolled experimentation with structured adoption.

Finding shadow AI isn’t about catching employees doing something wrong. It’s about understanding how work is actually getting done.

The first signals usually appear in unexpected places. Teams mention AI outputs in meetings. Documentation quality suddenly improves. Email response times shorten. These are productivity indicators, but they’re also clues.

Security teams often discover shadow AI usage through network monitoring, where repeated access to public AI tools becomes visible. Compliance teams may encounter it during data audits when they notice customer information appearing in unexpected workflows.
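As a rough illustration of the network-monitoring signal, the sketch below counts requests to a watchlist of AI tool domains in proxy log lines. The log format (`<timestamp> <user> <domain>`), the function name `count_ai_tool_hits`, and the `.example` domains are all assumptions made for this example, not a real product's log schema. The output is best treated as a conversation starter with teams rather than as evidence of wrongdoing.

```python
from collections import Counter

# Hypothetical watchlist; replace with the AI tool domains relevant to you.
AI_TOOL_DOMAINS = {"chatbot.example", "summarizer.example"}


def count_ai_tool_hits(log_lines, watchlist=AI_TOOL_DOMAINS):
    """Count requests per (user, domain) for domains on the watchlist.

    Assumes each line looks like "<timestamp> <user> <domain>".
    Lines that do not have exactly three fields are skipped.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue
        _timestamp, user, domain = parts
        domain = domain.lower()
        # Match a watchlist entry itself or any of its subdomains.
        if any(domain == d or domain.endswith("." + d) for d in watchlist):
            hits[(user, domain)] += 1
    return hits


sample_log = [
    "2024-05-01T09:00:00Z alice chatbot.example",
    "2024-05-01T09:05:00Z alice chatbot.example",
    "2024-05-01T09:07:00Z bob intranet.example",
    "2024-05-01T09:09:00Z carol app.summarizer.example",
    "malformed line",
]

for (user, domain), count in sorted(count_ai_tool_hits(sample_log).items()):
    print(f"{user} -> {domain}: {count}")
```

Repeated hits from the same team usually point to a workflow that an approved tool does not yet cover, which is more useful to know than who made the requests.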

The most reliable method, however, is cultural rather than technical. Organizations that openly ask employees how they’re using AI tend to uncover far more accurate information than those relying solely on monitoring.

When employees feel safe discussing their workflows, shadow AI stops being hidden behavior and becomes actionable insight.

Once visibility exists, governance can begin.

Managing shadow AI risk doesn’t require banning AI. It requires visibility, structure, and governance. Here’s a practical framework:

1. Interview teams and review network activity to identify external AI tools currently in use.

2. Define what types of data (PII, financial, proprietary, customer conversations) can and cannot be processed by AI systems.

3. Review the data policies of any AI tools employees are using. Confirm data retention, model training usage, and regional compliance standards.

4. Create clear internal guidelines that define which data AI may process, which workflows require human oversight, and how AI outputs are reviewed before they reach customers.

5. Provide employees with secure, enterprise-grade AI alternatives so they do not rely on unmanaged tools.

6. Educate teams on data privacy, compliance risk, and best practices to prevent future exposure.
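The data classification step in the framework above can be made concrete with a simple pre-submission check. The following is a minimal sketch, assuming a policy that blocks email addresses and card-like numbers. The pattern names, the two regular expressions, and the helper functions `find_restricted_data` and `redact` are hypothetical and far from complete PII detection.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
BLOCKED_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    # 13 to 16 digits, optionally separated by single spaces or hyphens.
    "card_number": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),
}


def find_restricted_data(text):
    """Return the sorted names of blocked data categories found in text."""
    return sorted(
        name for name, pattern in BLOCKED_PATTERNS.items() if pattern.search(text)
    )


def redact(text):
    """Replace every match of a blocked pattern with a labeled placeholder."""
    for name, pattern in BLOCKED_PATTERNS.items():
        text = pattern.sub(f"[{name.upper()} REDACTED]", text)
    return text


prompt = "Contact jane.doe@example.com, card 4111 1111 1111 1111."
print(find_restricted_data(prompt))
print(redact(prompt))
```

A check like this works best inside the approved tool itself, so employees get a warning at the moment they paste something sensitive instead of learning about the policy after the fact.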

The goal of AI governance isn’t to limit innovation. It’s to channel it into systems that protect both the organization and its customers.

Centralized AI platforms reduce risk because they create a controlled environment where data processing, storage, and access rules are defined in advance. Instead of employees relying on unknown third-party tools, they operate within approved infrastructure designed for compliance.

This shift changes the role of AI from a personal productivity hack to an enterprise capability.

Centralization also improves auditability. When conversations, documents, and AI outputs live inside governed systems, organizations gain traceability. They can show how data moves, who accessed it, and how it was used. This is what turns AI adoption from a compliance liability into a strategic advantage.
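To make the traceability point concrete, here is a minimal in-memory sketch of an audit trail that records who used which AI tool on which categories of data. The `AuditEvent` and `AuditLog` names and their fields are assumptions for illustration. A real deployment would write to durable, tamper-evident storage and would not describe any specific vendor's schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEvent:
    """One AI interaction: who did what, with which tool, on which data."""
    user: str
    tool: str
    action: str
    data_categories: tuple
    timestamp: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class AuditLog:
    """Append-only list of events (in memory, for illustration only)."""

    def __init__(self):
        self._events = []

    def record(self, user, tool, action, data_categories=()):
        event = AuditEvent(user, tool, action, tuple(data_categories))
        self._events.append(event)
        return event

    def events_for(self, user):
        """Answer 'who accessed it': all events for one user."""
        return [e for e in self._events if e.user == user]

    def tools_touching(self, category):
        """Answer 'how data moves': tools that processed a data category."""
        return sorted(
            {e.tool for e in self._events if category in e.data_categories}
        )


log = AuditLog()
log.record("alice", "summarizer", "summarize_transcript", ["pii"])
log.record("bob", "drafting_assistant", "draft_follow_up", ["proprietary"])
log.record("alice", "drafting_assistant", "draft_reply", ["pii", "financial"])
print(log.tools_touching("pii"))
```

Keeping events immutable and the log append-only mirrors what auditors typically expect: a record that shows what happened without the option of quietly editing it later.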

Most importantly, centralized platforms don’t just reduce risk; they improve consistency. Teams begin working with the same data context, the same automation layers, and the same governance standards. That consistency strengthens trust across both internal stakeholders and customers.

Effective AI governance doesn’t start with technology. It starts with clarity.

Organizations need to define what types of data can be processed by AI, which workflows require human oversight, and how AI outputs should be reviewed before reaching customers. Without this foundation, even the best platforms struggle to enforce compliance.

Training also matters more than most companies expect. Employees don’t need to become AI experts, but they do need to understand what information should never leave enterprise systems. When governance is framed as customer protection rather than restriction, adoption tends to improve.

The final step is ensuring that approved AI tools are genuinely useful. If official systems are slow, limited, or disconnected from daily workflows, shadow AI inevitably returns. Governance only works when compliant tools are also the most convenient ones.

Organizations that successfully address shadow AI often discover an unexpected outcome. Once AI usage becomes visible and structured, it stops being a hidden risk and starts becoming measurable intelligence.

Leaders gain insight into how conversations shape revenue. Compliance teams gain confidence in data handling processes. Employees gain tools that help them move faster without uncertainty.

Shadow AI isn’t inherently a failure of governance. It’s evidence that teams are already ready for AI. The challenge is turning that readiness into something secure and sustainable.

The companies that solve this problem first aren’t the ones that adopt AI fastest. They’re the ones that make it safest to use.

Shadow AI isn’t a future problem. It’s a present reality in most organizations, quietly shaping workflows long before leadership notices.

Ignoring it doesn’t eliminate the risk. Banning it doesn’t stop the behavior. The only real solution is visibility, governance, and centralized systems that give employees a better alternative than unmanaged tools.

When AI lives inside compliant, enterprise-ready infrastructure, organizations don’t just reduce risk. They gain control over how conversations, data, and automation drive outcomes.

That’s where platforms built specifically for governed, enterprise AI workflows come in.

Blazeo, for example, is designed to ensure conversational data, automation, and engagement tools live inside a single compliant system rather than scattered across disconnected AI tools. Instead of shadow workflows creating unseen exposure, everything from conversations to follow-ups becomes visible, auditable, and aligned with enterprise governance standards.

Because the goal isn’t to stop people from using AI.

It’s to make sure they’re using it somewhere safe.